Highlights

- This is part 2 of what to do when facing a limited amount of labeled data for supervised learning tasks. This time we get some human labeling work involved, but within a budget limit, so we need to be smart when selecting which samples to label.

- What is Active Learning?

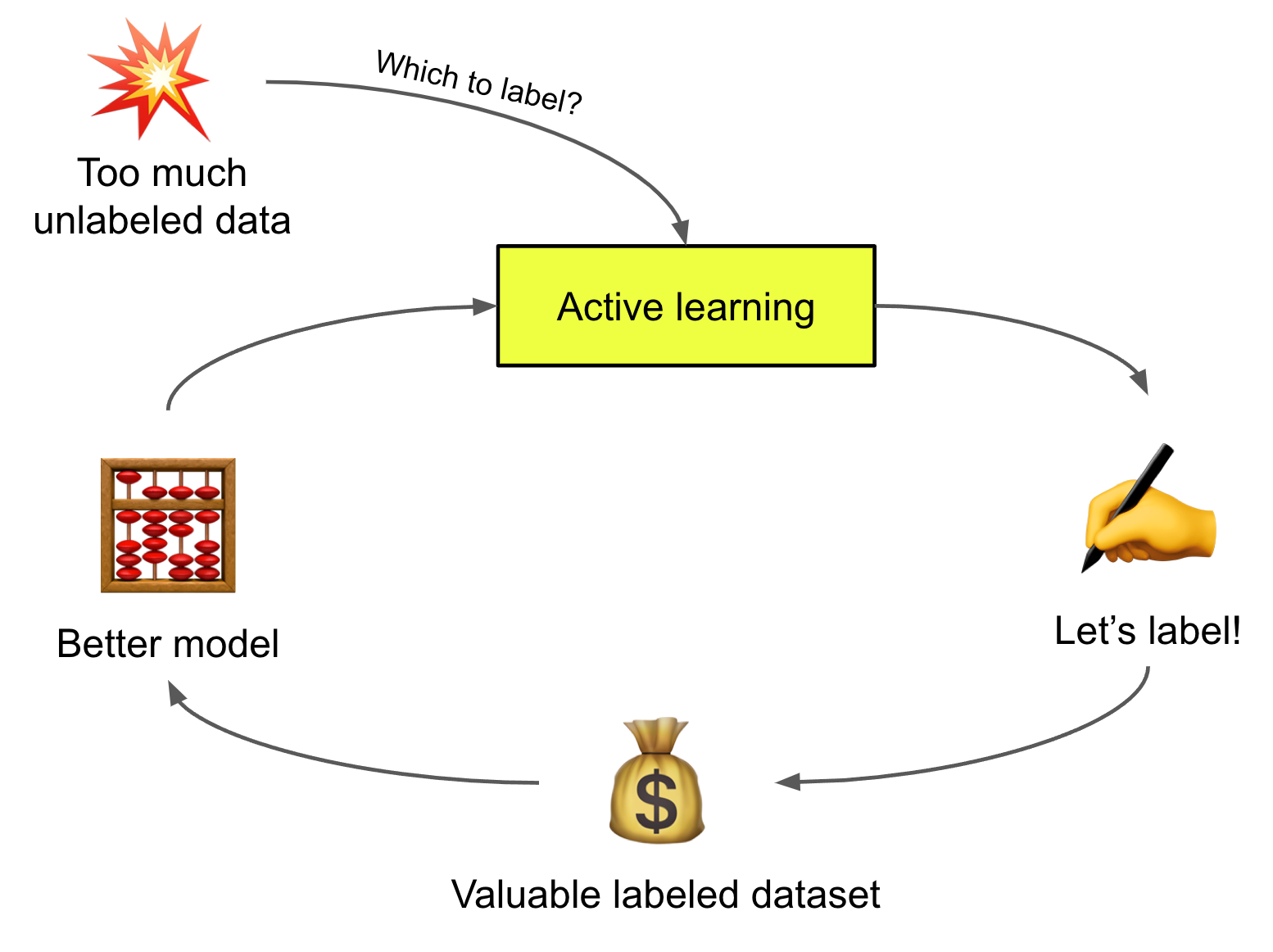

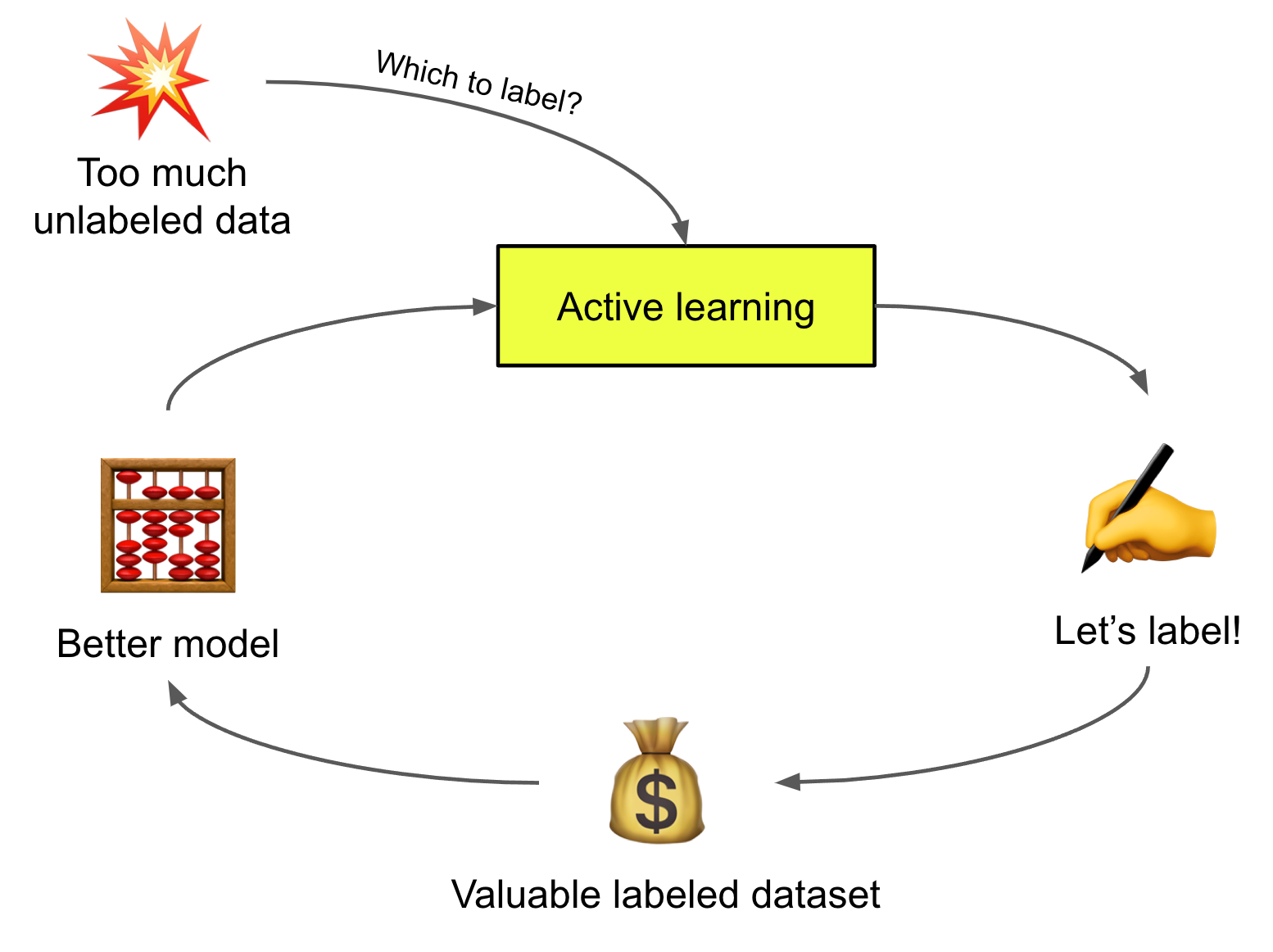

Given an unlabeled dataset U and a fixed labeling budget B, active learning aims to select a subset of B examples from U to be labeled such that they yield the largest possible improvement in model performance. This is an especially effective way of learning when data labeling is difficult and costly, e.g. for medical images. A classic survey paper from 2010 lists many key concepts. While some conventional approaches may not apply to deep learning, the discussion in this post mainly focuses on deep neural models and training in batch mode.

Fig. 1. Illustration of a cyclic workflow of active learning, producing better models more efficiently by smartly choosing which samples to label.


- Acquisition Function

The process of identifying the most valuable examples to label next is referred to as the “sampling strategy” or “query strategy”. The scoring function used in the sampling process is called the “acquisition function”, denoted as U(x). Data points with higher scores are expected to bring more value to model training if they get labeled.
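The cycle in Fig. 1, driven by an acquisition function, can be sketched as a pool-based loop. This is a minimal, library-free sketch: `train`, `oracle`, and `make_score_fn` are hypothetical stand-ins for a real training routine, a human annotator, and an acquisition function U(x), and the toy 1-D threshold model exists only so the loop runs end to end.

```python
def select_top_b(pool, score_fn, b):
    """Return the b pool items with the highest acquisition score U(x)."""
    return sorted(pool, key=score_fn, reverse=True)[:b]


def active_learning_loop(unlabeled, oracle, train, make_score_fn, budget, batch_size):
    """Label at most `budget` items from `unlabeled`, `batch_size` at a time."""
    pool = list(unlabeled)
    labeled = []                                  # list of (x, y) pairs
    model = train(labeled)
    while len(labeled) < budget and pool:
        b = min(batch_size, budget - len(labeled), len(pool))
        batch = select_top_b(pool, make_score_fn(model), b)
        for x in batch:
            pool.remove(x)
            labeled.append((x, oracle(x)))        # query the (hypothetical) annotator
        model = train(labeled)                    # retrain on the enlarged labeled set
    return model, labeled


# --- Toy usage: a 1-D threshold classifier (all hypothetical) ---
def train(labeled):
    # Threshold halfway between the largest negative and smallest positive seen.
    neg = [x for x, y in labeled if not y]
    pos = [x for x, y in labeled if y]
    lo = max(neg) if neg else 0.0
    hi = min(pos) if pos else 20.0
    return (lo + hi) / 2


def make_score_fn(threshold):
    # Points closest to the decision boundary are treated as the most uncertain.
    return lambda x: -abs(x - threshold)


oracle = lambda x: x >= 10
pool = [i + 0.5 for i in range(20)]
model, labeled = active_learning_loop(pool, oracle, train, make_score_fn, budget=6, batch_size=2)
```

Each round spends part of the budget on the currently highest-scoring points and then retrains, which is the batch-mode setting the post focuses on.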

- Uncertainty Sampling

Uncertainty sampling selects examples for which the model produces the most uncertain predictions. Given a single model, uncertainty can be estimated by the predicted probabilities, although one common complaint is that deep learning model predictions are often not calibrated and do not correlate well with true uncertainty. In fact, deep learning models are often overconfident.

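• Least confident score, also known as variation ratio: $U(x) = 1 - P_\theta(\hat{y} \mid x)$, where $\hat{y}$ is the most likely predicted label.

• Margin score: $U(x) = P_\theta(\hat{y}_1 \mid x) - P_\theta(\hat{y}_2 \mid x)$, where $\hat{y}_1$ and $\hat{y}_2$ are the most likely and second most likely predicted labels. Here a smaller margin means a more uncertain prediction, so samples with the smallest margin are preferred.

• Entropy: $U(x) = \mathcal{H}(P_\theta(y \mid x)) = -\sum_{y \in \mathcal{Y}} P_\theta(y \mid x) \log P_\theta(y \mid x)$.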
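A minimal sketch of the three scores for a single example, assuming `probs` is the model's predicted class distribution $P_\theta(y \mid x)$ given as a plain list with at least two classes. Since a small margin means high uncertainty, the negated margin is used when ranking by "higher score first".

```python
import math


def least_confident(probs):
    """Variation ratio: 1 - P(y_hat | x) for the top predicted label."""
    return 1.0 - max(probs)


def margin(probs):
    """Top-1 minus top-2 probability; SMALLER values mean MORE uncertainty."""
    top1, top2 = sorted(probs, reverse=True)[:2]
    return top1 - top2


def entropy(probs):
    """Shannon entropy in nats, treating 0 * log 0 as 0."""
    return -sum(p * math.log(p) for p in probs if p > 0)


probs = [0.7, 0.2, 0.1]
scores = {
    "least_confident": least_confident(probs),  # about 0.3
    "neg_margin": -margin(probs),              # about -0.5; higher = more uncertain
    "entropy": entropy(probs),
}
```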

Another way to quantify uncertainty is to rely on a committee of expert models, known as Query-By-Committee (QBC). QBC measures uncertainty based on a pool of opinions, so it is critical to maintain some level of disagreement among committee members.
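The text does not fix a particular disagreement measure; vote entropy is one common choice for QBC. A minimal sketch, assuming each committee member casts one hard label for the example:

```python
import math
from collections import Counter


def vote_entropy(votes):
    """Entropy of the committee's vote distribution for one example.

    votes: one predicted label per committee member.
    0 means unanimous agreement; higher means more disagreement.
    """
    n = len(votes)
    return -sum((c / n) * math.log(c / n) for c in Counter(votes).values())


unanimous = vote_entropy(["cat", "cat", "cat", "cat"])
split = vote_entropy(["cat", "cat", "dog", "dog"])
```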

- Diversity Sampling

Diversity sampling intends to find a collection of samples that represents the entire data distribution well. Diversity is important because the model is expected to work well on any data in the wild, not just on a narrow subset. Selected samples should be representative of the underlying distribution. Common approaches often rely on quantifying the similarity between samples.

- Expected Model Change

Expected model change refers to the impact that a sample brings to model training. The impact can be the influence on the model weights or the improvement in the training loss.

- Hybrid Strategy

Many of the methods above are not mutually exclusive. A hybrid sampling strategy values different attributes of data points, combining different sampling preferences into one. Often we want to select samples that are uncertain but also highly representative.

New highlights added March 4, 2024 at 11:13 AM

- Measuring Uncertainty

The model uncertainty is commonly categorized into two buckets (Der Kiureghian & Ditlevsen 2009, Kendall & Gal 2017):

• Aleatoric uncertainty is introduced by noise in the data (e.g. noisy sensor readings or noise in the measurement process), and it can be input-dependent or input-independent. It is generally considered irreducible, since information about the ground truth is missing.

• Epistemic uncertainty refers to the uncertainty in the model parameters, meaning we do not know whether the model can best explain the data. This type of uncertainty is theoretically reducible given more data.

- Ensemble and Approximated Ensemble

There is a long tradition in machine learning of using ensembles to improve model performance. When there is significant diversity among models, ensembles are expected to yield better results. Many ML algorithms bear this out in practice; for example, AdaBoost aggregates many weak learners to perform similarly to or even better than a single strong learner. Bootstrapping aggregates multiple rounds of resampling to achieve a more accurate estimation of metrics. Random forests and GBM (gradient boosting machines) are also good examples of the effectiveness of ensembling.

- To get better uncertainty estimation, it is intuitive to aggregate a collection of independently trained models. However, it is expensive to train a single deep neural network, let alone many of them.

- In active learning, a more common approach is to use dropout to “simulate” a probabilistic Gaussian process (Gal & Ghahramani 2016). We ensemble multiple samples collected from the same model, each with a different dropout mask applied during the forward pass, to estimate the model uncertainty (epistemic uncertainty). This process is named MC dropout (Monte Carlo dropout); with dropout applied before every weight layer, it was shown to be mathematically equivalent to an approximation of the probabilistic deep Gaussian process (Gal & Ghahramani 2016). This simple idea has been shown to be effective for classification with small datasets and is widely adopted in scenarios where efficient model uncertainty estimation is needed.
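A minimal, dependency-free sketch of MC dropout at prediction time. The two-layer network and its weights are hypothetical and untrained; the point is only that dropout stays active at inference, that each of the `T` forward passes draws a fresh random mask, and that the averaged softmax outputs approximate the predictive distribution. The per-pass outputs can then be fed into uncertainty scores such as entropy or variation ratio.

```python
import math
import random


def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def linear(W, b, h):
    """Dense layer: W is a list of rows, one per output unit."""
    return [sum(w * v for w, v in zip(row, h)) + bias for row, bias in zip(W, b)]


def dropout(h, p, rng):
    """Inverted dropout: zero each unit with prob. p, rescale survivors by 1/(1-p)."""
    return [0.0 if rng.random() < p else v / (1.0 - p) for v in h]


def mc_dropout_predict(x, W1, b1, W2, b2, p=0.5, T=50, seed=0):
    """Run T stochastic forward passes; return (mean probs, per-pass probs)."""
    rng = random.Random(seed)
    passes = []
    for _ in range(T):
        h = linear(W1, b1, dropout(x, p, rng))            # dropout before layer 1
        h = [max(0.0, v) for v in h]                       # ReLU
        passes.append(softmax(linear(W2, b2, dropout(h, p, rng))))  # before layer 2
    mean = [sum(probs[k] for probs in passes) / T for k in range(len(passes[0]))]
    return mean, passes


# Hypothetical toy weights: 3 inputs -> 2 hidden units -> 2 classes.
W1, b1 = [[0.2, -0.1, 0.4], [0.5, 0.3, -0.2]], [0.0, 0.1]
W2, b2 = [[1.0, -1.0], [-0.5, 0.8]], [0.0, 0.0]
mean_probs, passes = mc_dropout_predict([1.0, -0.5, 2.0], W1, b1, W2, b2)
```

With `p=0.0` every pass is identical, which is a quick way to see that the spread across passes comes entirely from the dropout masks.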

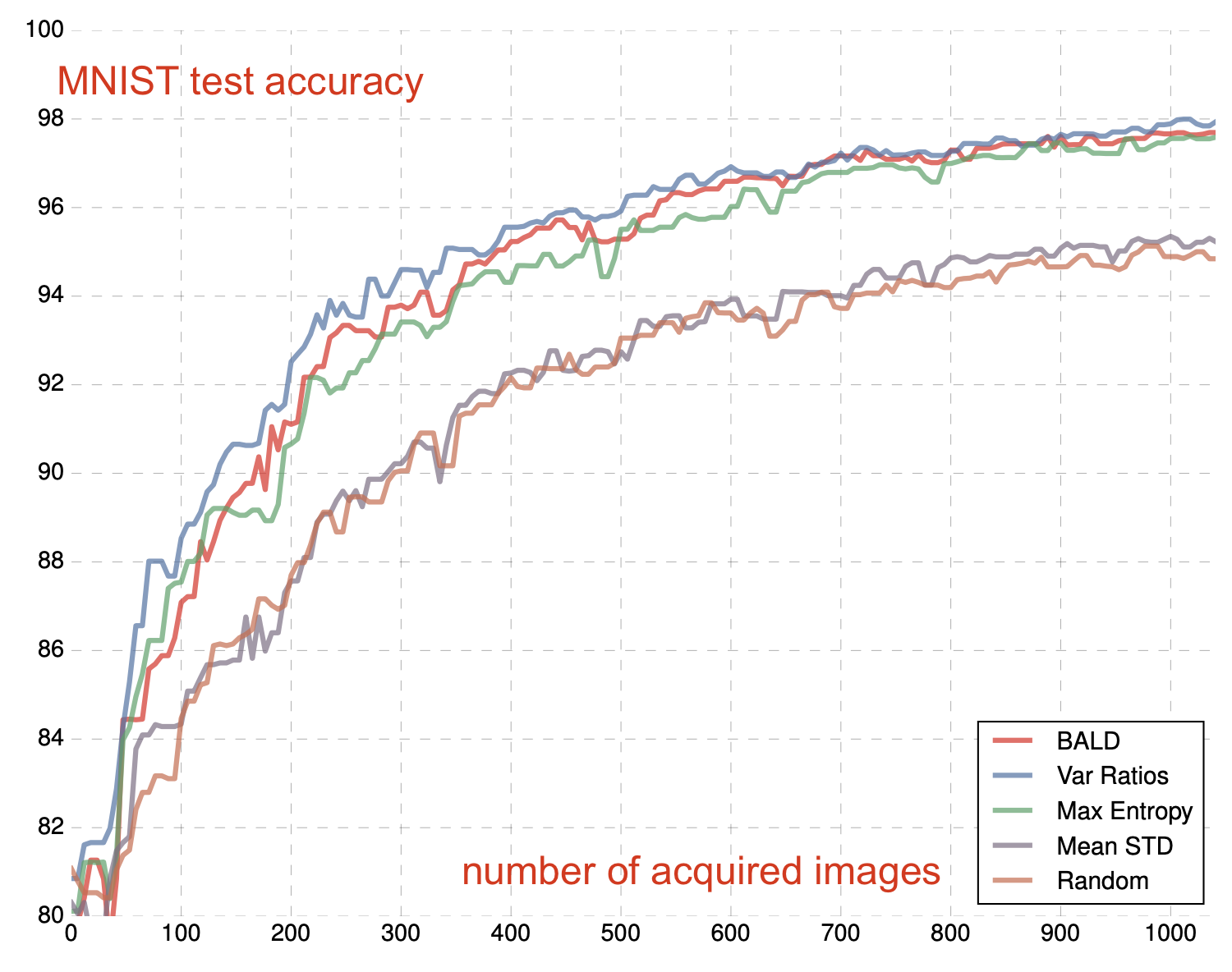

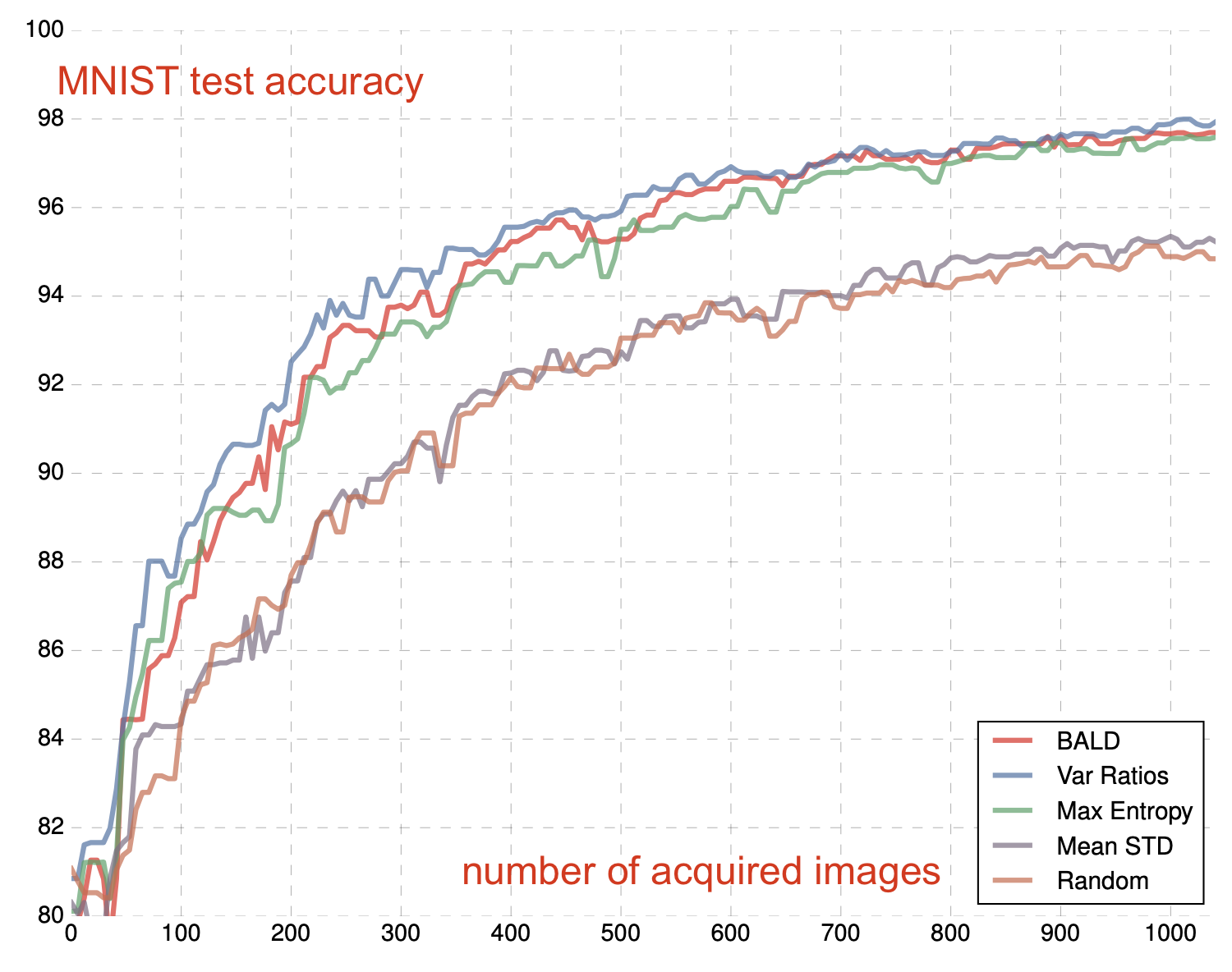

- DBAL (Deep Bayesian Active Learning; Gal et al. 2017) approximates Bayesian neural networks with MC dropout such that it learns a distribution over model weights. In their experiments, MC dropout performed better than the random baseline and mean standard deviation (Mean STD), as did variation ratios and entropy measurement.

Fig. 2. Active learning results of DBAL on MNIST. (Image source: Gal et al. 2017).


- Beluch et al. (2018) compared ensemble-based models with MC dropout and found that the combination of a naive ensemble (i.e. training multiple models separately and independently) and variation ratio yields better-calibrated predictions than the others. However, naive ensembles are very expensive, so they explored a few cheaper alternatives:

• Snapshot ensemble: Use a cyclic learning rate schedule to train an implicit ensemble such that it converges to different local minima.

• Diversity encouraging ensemble (DEE): Use a base network trained for a small number of epochs as initialization for n different networks, each trained with dropout to encourage diversity.

• Split head approach: One base model has multiple heads, each corresponding to one classifier.

Unfortunately, all the cheap implicit ensemble options above perform worse than naive ensembles. Considering the limit on computational resources, MC dropout is still a pretty good and economical choice. Naturally, people have also tried combining ensembles with MC dropout (Pop & Fulop 2018) to get a bit of additional performance gain from a stochastic ensemble.
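The snapshot ensemble option listed above relies on a cyclic learning rate schedule. A minimal sketch of one such schedule, cosine annealing that restarts at the start of every cycle, which to our understanding is the form used in the Snapshot Ensembles paper; `lr0`, `total_iters`, and `n_cycles` are illustrative parameters. A snapshot is saved at the last step of each cycle, when the learning rate is near zero and the model has settled into a local minimum.

```python
import math


def snapshot_lr(t, total_iters, n_cycles, lr0):
    """Cyclic cosine annealing: each cycle starts at lr0 and decays toward 0.

    t is the 0-indexed iteration number.
    """
    cycle_len = math.ceil(total_iters / n_cycles)
    return lr0 / 2 * (math.cos(math.pi * (t % cycle_len) / cycle_len) + 1)


def snapshot_iters(total_iters, n_cycles):
    """Iterations at which to save a snapshot: the last step of each cycle."""
    cycle_len = math.ceil(total_iters / n_cycles)
    return [min(k * cycle_len, total_iters) - 1 for k in range(1, n_cycles + 1)]


schedule = [snapshot_lr(t, total_iters=100, n_cycles=5, lr0=0.1) for t in range(100)]
saves = snapshot_iters(total_iters=100, n_cycles=5)   # [19, 39, 59, 79, 99]
```

An ensemble prediction would then average the softmax outputs of the saved snapshots, at the cost of a single training run.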

- Uncertainty in Parameter Space

Bayes-by-backprop (Blundell et al. 2015) measures weight uncertainty in neural networks directly. The method maintains a probability distribution over the weights $w$, modeled as a variational distribution $q(w \mid \theta)$, since the true posterior $p(w \mid \mathcal{D})$ is not directly tractable.
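For reference, the objective Bayes-by-backprop minimizes, as we recall it from Blundell et al. (2015), is the variational free energy: a KL term pulling $q(w \mid \theta)$ toward a prior $p(w)$, minus the expected log-likelihood of the data. With a diagonal Gaussian posterior $\theta = (\mu, \rho)$, weights are sampled via the reparameterization trick so that gradients flow to $\theta$:

$$
\begin{aligned}
\mathcal{F}(\mathcal{D}, \theta) &= \mathrm{KL}\big[q(w \mid \theta) \,\|\, p(w)\big] - \mathbb{E}_{q(w \mid \theta)}\big[\log p(\mathcal{D} \mid w)\big], \qquad \theta^* = \arg\min_\theta \mathcal{F}(\mathcal{D}, \theta) \\
w &= \mu + \log\big(1 + \exp(\rho)\big) \circ \epsilon, \qquad \epsilon \sim \mathcal{N}(0, I)
\end{aligned}
$$

Because $\mathcal{F}(\mathcal{D}, \theta) = \mathrm{KL}\big[q(w \mid \theta) \,\|\, p(w \mid \mathcal{D})\big] - \log p(\mathcal{D})$ and $\log p(\mathcal{D})$ does not depend on $\theta$, minimizing $\mathcal{F}$ moves $q(w \mid \theta)$ toward the intractable posterior without ever evaluating it.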

- Loss Prediction

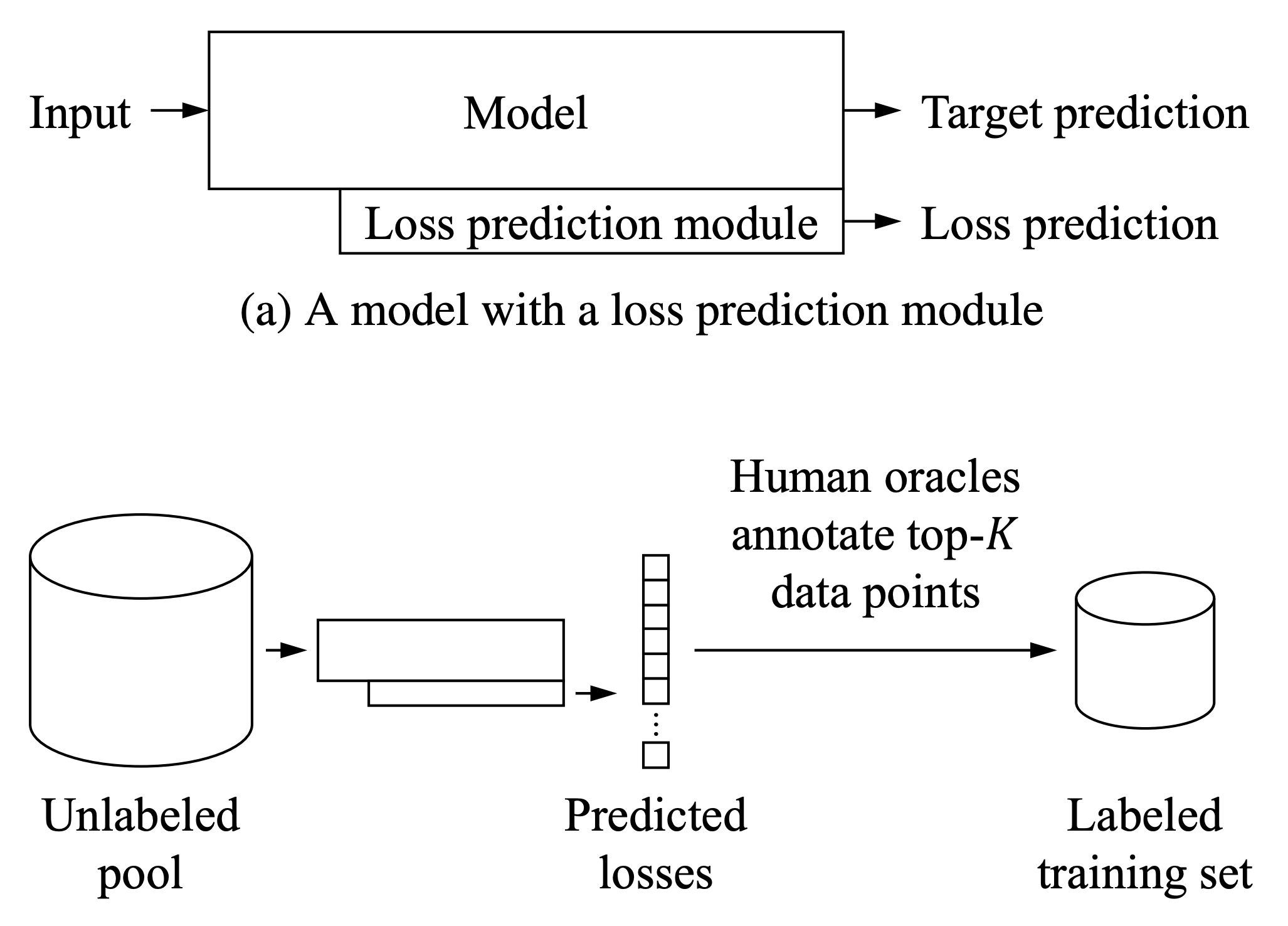

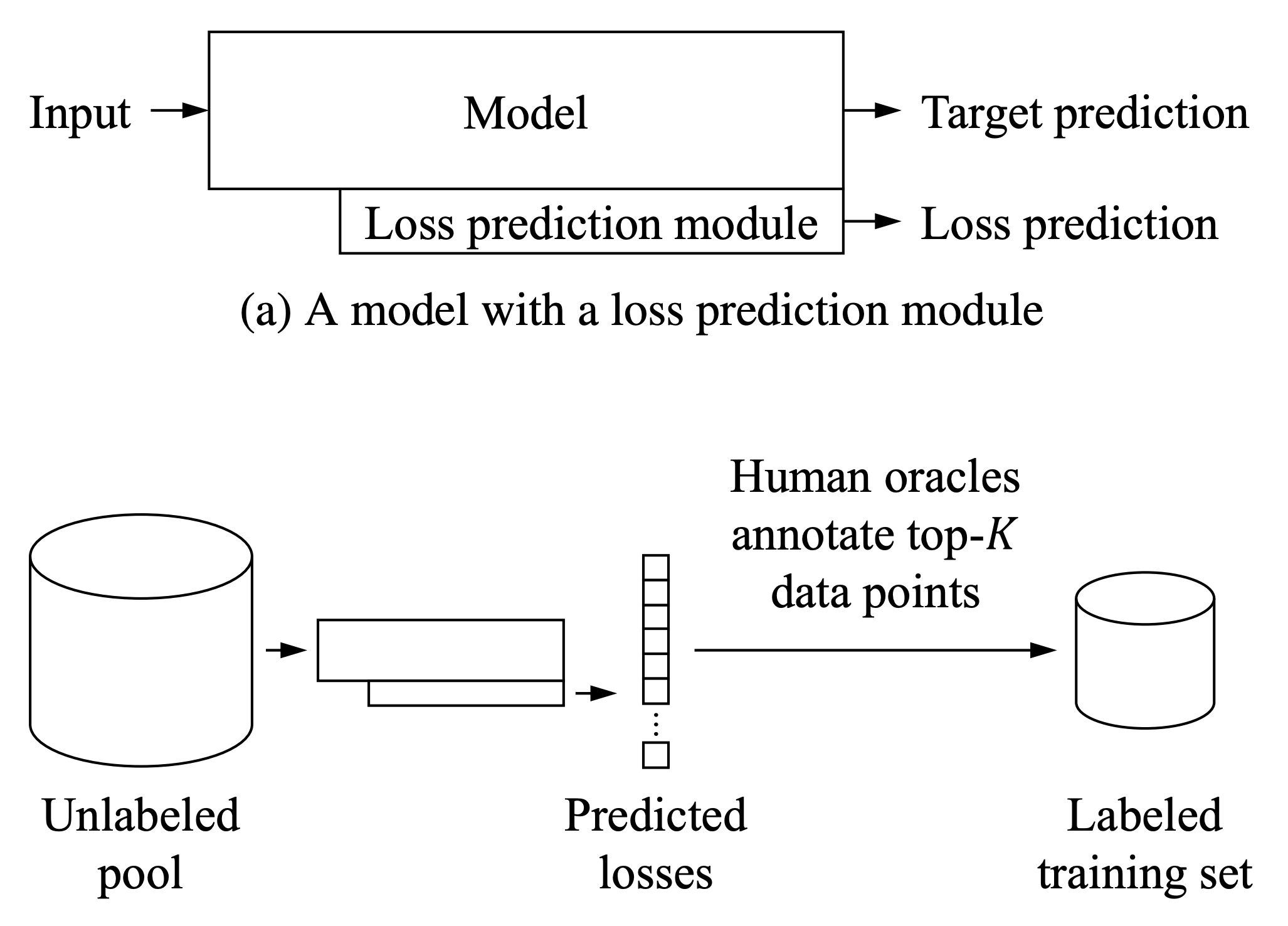

The loss objective guides model training. A low loss value indicates that a model makes good and accurate predictions. Yoo & Kweon (2019) designed a loss prediction module to predict the loss value for unlabeled inputs, as an estimate of how good the model prediction is on the given data. Data samples are selected if the loss prediction module predicts a high loss value for them, which signals uncertain predictions. The loss prediction module is a simple MLP with dropout that takes several intermediate layer features as inputs, applies global average pooling to each, and concatenates them.

Fig. 3. Use the model with a loss prediction module to do active learning selection. (Image source: Yoo & Kweon 2019)
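A minimal sketch of how the module's inputs are assembled and how its output drives selection: each intermediate feature map is global-average-pooled, the pooled vectors are concatenated, and a head maps them to a scalar predicted loss; the unlabeled samples with the highest predicted loss are picked. For brevity the head is a single hypothetical linear layer with made-up weights rather than a trained MLP with dropout, and feature maps are represented as lists of flattened channels.

```python
def global_avg_pool(feature_map):
    """feature_map: list of channels, each a flat list of spatial activations."""
    return [sum(channel) / len(channel) for channel in feature_map]


def predict_loss(feature_maps, weights, bias):
    """GAP each intermediate feature map, concatenate, apply a linear head."""
    z = []
    for fm in feature_maps:
        z.extend(global_avg_pool(fm))
    return sum(w * v for w, v in zip(weights, z)) + bias


def select_by_predicted_loss(samples, weights, bias, b):
    """Return ids of the b samples with the highest predicted loss."""
    ranked = sorted(samples, key=lambda k: predict_loss(samples[k], weights, bias), reverse=True)
    return ranked[:b]


# Each sample: [layer-1 map with 2 channels, layer-2 map with 1 channel].
samples = {
    "a": [[[1, 1, 1, 1], [0, 0, 2, 2]], [[3, 3]]],
    "b": [[[0, 0, 0, 0], [1, 1, 1, 1]], [[0, 1]]],
    "c": [[[2, 2, 2, 2], [0, 0, 0, 0]], [[1, 1]]],
}
weights, bias = [0.5, -0.2, 1.0], 0.1       # hypothetical, untrained
picked = select_by_predicted_loss(samples, weights, bias, b=2)   # ["a", "c"]
```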


- Adversarial Setup

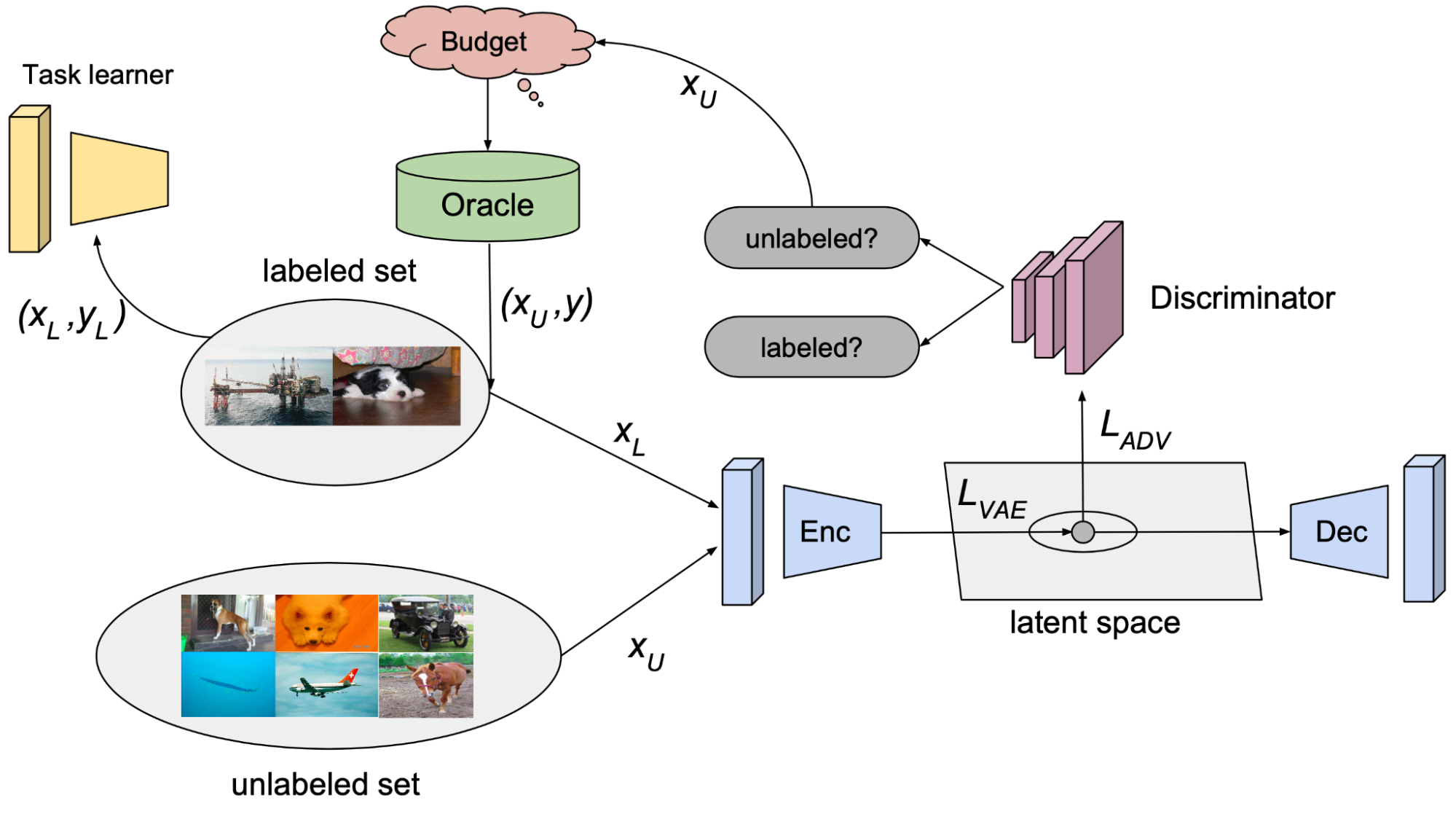

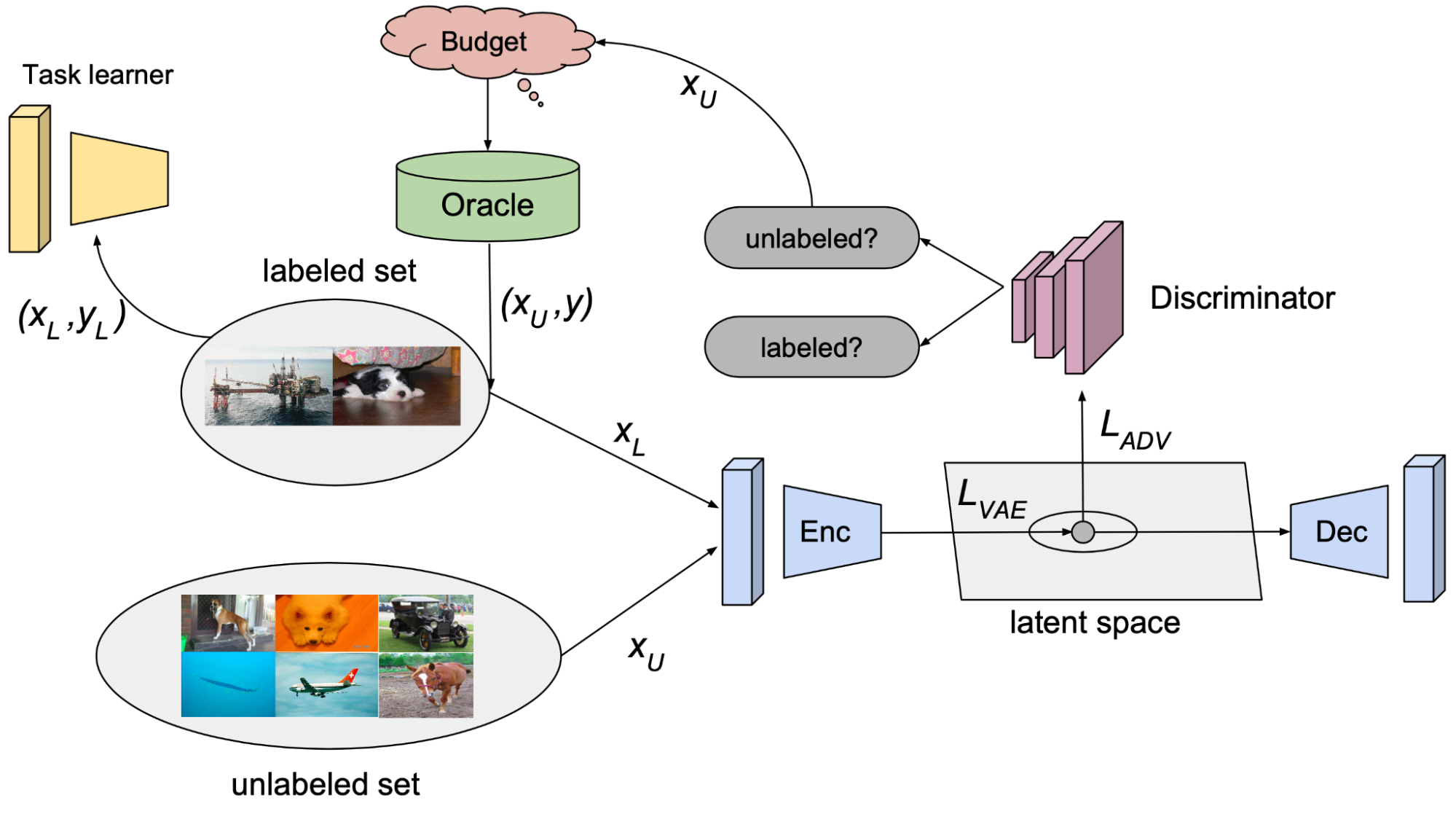

Sinha et al. (2019) proposed a GAN-like setup, named VAAL (Variational Adversarial Active Learning), where a discriminator is trained to distinguish unlabeled data from labeled data. Interestingly, the acquisition criterion in VAAL does not depend on task performance.

Fig. 5. Illustration of VAAL (Variational adversarial active learning). (Image source: Sinha et al. 2019)

• The β-VAE learns a latent feature space $z^l \cup z^u$ for labeled and unlabeled data respectively, aiming to trick the discriminator $D(\cdot)$ into believing that all data points come from the labeled pool;

• The discriminator $D(\cdot)$ predicts whether a sample is labeled (1) or not (0) based on its latent representation $z$. VAAL selects unlabeled samples with low discriminator scores, which indicate that those samples are sufficiently different from previously labeled ones.


- Core-sets Approach

A core-set is a concept in computational geometry, referring to a small set of points that approximates the shape of a larger point set. The approximation quality can be captured by some geometric measure. In active learning, we expect a model trained on the core-set to perform comparably to a model trained on all data points.
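One standard way to pick such a covering subset is the greedy k-center heuristic: repeatedly add the point farthest from its nearest already-selected point, so that every point ends up close to some selected center. A minimal sketch, assuming samples are represented by feature vectors (e.g. penultimate-layer embeddings) compared with Euclidean distance; the toy points are made up.

```python
import math


def k_center_greedy(points, labeled_idx, b):
    """Greedily choose b new indices that shrink the max distance to a center.

    points: list of feature vectors; labeled_idx: indices that are already labeled.
    """
    selected = set(labeled_idx)
    # Distance from each point to its nearest current center (inf if none yet).
    min_d = [min((math.dist(p, points[c]) for c in selected), default=math.inf)
             for p in points]
    chosen = []
    for _ in range(b):
        candidates = [j for j in range(len(points)) if j not in selected]
        if not candidates:
            break
        i = max(candidates, key=lambda j: min_d[j])   # farthest from any center
        chosen.append(i)
        selected.add(i)
        for j, p in enumerate(points):
            min_d[j] = min(min_d[j], math.dist(p, points[i]))
    return chosen


points = [(0, 0), (0, 1), (10, 0), (10, 1), (5, 5)]
batch = k_center_greedy(points, labeled_idx=[0], b=2)   # [3, 4]
```

Each step costs one pass over the pool, which is one reason core-set selection becomes expensive on large unlabeled sets.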

Sener & Savarese (2018) treat active learning as a core-set selection problem. Let’s say there are N samples in total accessible during training. During active learning, a small set of data points gets labeled at every time step t, denoted as $S^{(t)}$. The upper bound of the learning objective can be decomposed into several terms, among them the core-set loss, defined as the difference between the average empirical loss over the labeled samples and the average loss over the entire dataset including unlabeled ones.
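Writing $\ell(x, y)$ for the loss of the model trained on the labeled set $S^{(t)}$ and $\{(x_i, y_i)\}_{i=1}^{N}$ for the full pool, one way to spell out this bound, reconstructed here via the triangle inequality rather than copied from the paper, is:

$$
\mathbb{E}_{(x,y)\sim p}\big[\ell(x,y)\big] \le \Big|\mathbb{E}_{(x,y)\sim p}\big[\ell(x,y)\big] - \frac{1}{N}\sum_{i=1}^{N}\ell(x_i,y_i)\Big| + \frac{1}{|S^{(t)}|}\sum_{j \in S^{(t)}}\ell(x_j,y_j) + \Big|\frac{1}{N}\sum_{i=1}^{N}\ell(x_i,y_i) - \frac{1}{|S^{(t)}|}\sum_{j \in S^{(t)}}\ell(x_j,y_j)\Big|
$$

The three terms are the generalization error, the training error on the labeled samples, and the core-set loss. When the training error on labeled data is close to zero, as is often the case for deep networks, tightening the bound mainly means choosing $S^{(t)}$ to shrink the core-set loss.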

- The core-set approach works well on image classification tasks when there is a small number of classes. When the number of classes grows large or the data dimensionality increases (the “curse of dimensionality”), the core-set method becomes less effective (Sinha et al. 2019).

Because core-set selection is expensive, Coleman et al. (2020) experimented with a weaker model (e.g. a smaller, weaker architecture that is not fully trained) as a proxy. They found empirically that using a weaker proxy can significantly shorten each repeated cycle of training models and selecting samples, without hurting the final error much. Their method is referred to as SVP (Selection via Proxy).

- Diverse Gradient Embedding

BADGE (Batch Active learning by Diverse Gradient Embeddings; Ash et al. 2020) tracks both model uncertainty and data diversity in the gradient space. Uncertainty is measured by the gradient magnitude w.r.t. the final layer of the network, and diversity is captured by a set of samples that spans the gradient space.

• Uncertainty. Given an unlabeled sample $x$, BADGE first computes the prediction $\hat{y}$ and the gradient $g_x$ of the loss on $(x, \hat{y})$ w.r.t. the last layer’s parameters. The authors observed that the norm of $g_x$ conservatively estimates the example’s influence on model learning, and that high-confidence samples tend to have gradient embeddings of small magnitude.

• Diversity. Given the gradient embeddings $g_x$ of many samples, BADGE runs k-means++ to sample data points accordingly.
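A minimal sketch of both steps, assuming the network ends in a linear layer $W$ (one row per class) applied to penultimate features $h$, followed by softmax and cross-entropy. In that setup the gradient of the loss on $(x, \hat{y})$ w.r.t. $W$ is the outer product $(p - e_{\hat{y}})\,h^\top$, so confident predictions give small embeddings. The diversity step is plain k-means++ seeding with the first center drawn at random, which may differ in details from the authors' implementation; the features and logits are made up.

```python
import math
import random


def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def gradient_embedding(h, logits):
    """Flattened (p - onehot(y_hat)) outer h: CE gradient w.r.t. the last layer."""
    p = softmax(logits)
    y_hat = max(range(len(p)), key=p.__getitem__)        # pseudo-label
    coef = [pk - (1.0 if k == y_hat else 0.0) for k, pk in enumerate(p)]
    return [c * hj for c in coef for hj in h]


def kmeanspp_seeding(X, k, seed=0):
    """Pick k distinct indices; each new one is drawn with prob. proportional to D(x)^2."""
    rng = random.Random(seed)
    k = min(k, len(X))
    chosen = [rng.randrange(len(X))]
    d2 = [math.dist(x, X[chosen[0]]) ** 2 for x in X]
    while len(chosen) < k:
        total = 0.0
        for w in d2:
            total += w
        if total == 0.0:          # every remaining point coincides with a center
            chosen.append(rng.choice([i for i in range(len(X)) if i not in chosen]))
        else:
            r, acc = rng.random() * total, 0.0
            for i, w in enumerate(d2):
                acc += w
                if w > 0 and acc >= r:
                    break
            chosen.append(i)
        for j, x in enumerate(X):
            d2[j] = min(d2[j], math.dist(x, X[chosen[-1]]) ** 2)
    return chosen


# Hypothetical penultimate features and logits for four unlabeled samples.
feats = [[1.0, 2.0], [0.5, 0.5], [2.0, 0.0], [1.0, 1.0]]
logits = [[0.0, 0.0], [10.0, 0.0], [0.2, 0.1], [0.0, 3.0]]
G = [gradient_embedding(h, z) for h, z in zip(feats, logits)]
batch = kmeanspp_seeding(G, k=2)
```

D² sampling favors embeddings that are both large (uncertain) and far from already chosen ones (diverse), which is how one procedure covers both criteria.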

- Measuring Training Effects: Quantify Model Changes

Settles et al. (2008) introduced an active learning query strategy named EGL (Expected Gradient Length). The motivation is to find samples that would trigger the greatest update to the model if their labels were known.
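EGL scores a sample by the gradient norm it would induce, averaged over the model's own label distribution: $U(x) = \sum_{y} P_\theta(y \mid x)\,\lVert \nabla_\theta \ell(x, y) \rVert$. A minimal sketch under two simplifying assumptions: the gradient is taken only w.r.t. a final linear layer on features $h$ (softmax + cross-entropy), and the gradient on the already-labeled set is treated as roughly zero, so only the new example's gradient counts.

```python
import math


def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def expected_gradient_length(h, logits):
    """Sum over labels y of P(y|x) * ||grad of CE(x, y) w.r.t. last-layer W||."""
    p = softmax(logits)
    h_norm = math.sqrt(sum(v * v for v in h))
    egl = 0.0
    for y, p_y in enumerate(p):
        # The gradient is the outer product (p - e_y) h^T, whose Frobenius
        # norm factorizes as ||p - e_y|| * ||h||.
        resid = math.sqrt(sum((pk - (1.0 if k == y else 0.0)) ** 2 for k, pk in enumerate(p)))
        egl += p_y * resid * h_norm
    return egl


uncertain = expected_gradient_length([3.0, 4.0], [0.0, 0.0])
confident = expected_gradient_length([3.0, 4.0], [20.0, 0.0])
```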

- BALD (Bayesian Active Learning by Disagreement; Houlsby et al. 2011) aims to identify samples that maximize the information gain about the model weights, which is equivalent to maximizing the decrease in expected posterior entropy.

- The underlying interpretation is to “seek x for which the model is marginally most uncertain about y (high H(y|x,D)), but for which individual settings of the parameters are confident (low H(y|x,θ)).” In other words, each individual posterior draw is confident, but a collection of draws carries diverse opinions.
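In formula form, the BALD score is the mutual information $\mathbb{I}[y; \theta \mid x, \mathcal{D}] = \mathcal{H}[y \mid x, \mathcal{D}] - \mathbb{E}_{p(\theta \mid \mathcal{D})}\big[\mathcal{H}[y \mid x, \theta]\big]$. A minimal sketch that estimates it from $T$ sampled predictions, assuming each sample is the class distribution from one posterior draw (for example one MC dropout forward pass):

```python
import math


def entropy(p):
    """Shannon entropy in nats, treating 0 * log 0 as 0."""
    return -sum(pk * math.log(pk) for pk in p if pk > 0)


def bald_score(samples):
    """samples: T probability vectors for ONE input, one per posterior draw."""
    T = len(samples)
    K = len(samples[0])
    mean = [sum(s[k] for s in samples) / T for k in range(K)]
    predictive_entropy = entropy(mean)                         # H[y | x, D]
    expected_entropy = sum(entropy(s) for s in samples) / T    # E_theta H[y | x, theta]
    return predictive_entropy - expected_entropy


disagree = bald_score([[1.0, 0.0], [0.0, 1.0]])   # confident draws that disagree
agree = bald_score([[0.5, 0.5], [0.5, 0.5]])      # every draw equally unsure
```

The subtracted expected-entropy term captures uncertainty that every draw shares, which is commonly read as the aleatoric part, while the difference isolates disagreement between draws, the epistemic part.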

- Forgetting Events

To investigate whether neural networks tend to forget previously learned information, Mariya Toneva et al. (2019) designed an experiment: they track the model prediction for each sample during training and count each sample's transitions from being classified correctly to incorrectly or vice versa. Samples can then be categorized accordingly:

• Forgettable samples: if the class label changes across training epochs.

• Unforgettable (redundant) samples: if the class label assignment is consistent across training epochs. Those samples are never forgotten once learned.

They found a large number of unforgettable examples that are never forgotten once learned. Examples with noisy labels or images with “uncommon” features (visually complicated to classify) are among the most forgotten examples. The experiments empirically validated that unforgettable examples can be safely removed without compromising model performance.

In the implementation, a forgetting event is only counted when a sample is included in the current training batch; that is, forgetting is computed across presentations of the same example in subsequent mini-batches. The number of forgetting events per sample is quite stable across different seeds, and forgettable examples have a slight tendency to be learned for the first time later in training. Forgetting events were also found to be transferable throughout the training period and between architectures.
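A minimal sketch of the bookkeeping, assuming we record, for one example, whether it was classified correctly at each presentation in a mini-batch. A forgetting event is a correct-to-incorrect transition (the reverse direction marks a learning event). Treating never-learned examples as forgettable is an assumption of this sketch.

```python
def count_forgetting_events(correct):
    """correct: per-presentation correctness of ONE example, in training order."""
    return sum(1 for prev, cur in zip(correct, correct[1:]) if prev and not cur)


def categorize(correct):
    """'unforgettable' if learned at some point and never forgotten afterwards."""
    if any(correct) and count_forgetting_events(correct) == 0:
        return "unforgettable"
    return "forgettable"   # includes never-learned examples (assumption)


history = {
    "easy": [False, True, True, True],
    "flaky": [True, False, True, False, True],
    "hard": [False, False, False],
}
events = {k: count_forgetting_events(v) for k, v in history.items()}
labels = {k: categorize(v) for k, v in history.items()}
```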

Forgetting events can be used as a signal for active learning acquisition if we hypothesize that a model changing its predictions during training is an indicator of model uncertainty. However, the ground truth is unknown for unlabeled samples. Bengar et al. (2021) proposed a new metric called label dispersion for this purpose.
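Label dispersion needs no ground truth: it only measures how consistently the model predicts the same label for an unlabeled sample over training. A minimal sketch of the definition as we understand it, $\text{dispersion}(x) = 1 - f_x / T$, where $f_x$ is how many of the $T$ recorded predictions equal the most frequent predicted label:

```python
from collections import Counter


def label_dispersion(predictions):
    """predictions: predicted labels for ONE unlabeled sample at T checkpoints."""
    T = len(predictions)
    f_x = Counter(predictions).most_common(1)[0][1]   # count of the modal label
    return 1.0 - f_x / T


stable = label_dispersion(["cat"] * 5)                       # 0.0
unstable = label_dispersion(["cat", "dog", "cat", "bird"])   # 0.5
```

Higher dispersion means the prediction kept changing, so such samples would be ranked first for labeling.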

- Hybrid

When running active learning in batch mode, it is important to control diversity within a batch. Suggestive Annotation (SA; Yang et al. 2017) is a two-step hybrid strategy that aims to select samples that are both highly uncertain and highly representative for labeling. It uses uncertainty obtained from an ensemble of models trained on the labeled data, and core-sets for choosing representative data samples.

- First, SA selects the top K images with the highest uncertainty scores to form a candidate pool $S_c \subseteq S_u$. The uncertainty is measured as the disagreement between multiple models trained with bootstrapping.

- The next step is to find a subset $S_a \subseteq S_c$ with the highest representativeness. The cosine similarity between the feature vectors of two inputs approximates how similar they are. The representativeness of $S_a$ for $S_u$ reflects how well $S_a$ can represent all the samples in $S_u$.
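The exact representativeness formula is not part of these highlights. A minimal sketch assuming a max-similarity coverage form, $F(S_a, S_u) = \sum_{x \in S_u} \max_{x' \in S_a} \cos(x', x)$, in which each sample of $S_u$ counts as covered by its most similar member of $S_a$, and assuming $S_a$ is grown greedily one candidate at a time; the feature vectors are made up.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def representativeness(subset, pool):
    """Sum over pool samples of their best cosine similarity to the subset."""
    return sum(max(cosine(s, x) for s in subset) for x in pool)


def greedy_representative(candidates, pool, k):
    """Grow S_a from the candidate pool S_c, maximizing F(S_a, S_u) at each step."""
    chosen = []
    for _ in range(min(k, len(candidates))):
        rest = [c for c in candidates if c not in chosen]
        chosen.append(max(rest, key=lambda c: representativeness(chosen + [c], pool)))
    return chosen


S_u = [(1.0, 0.0), (0.9, 0.1), (0.0, 1.0), (0.1, 0.9), (0.7, 0.7)]
S_c = [(1.0, 0.0), (0.9, 0.1), (0.0, 1.0)]     # e.g. the top-K uncertain samples
S_a = greedy_representative(S_c, S_u, k=2)       # [(0.9, 0.1), (0.0, 1.0)]
```

After the first pick covers the samples near the x-axis, the second pick goes to the other direction, which is the diversity effect the representativeness step is meant to provide.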

- Zhdanov (2019) runs a process similar to SA, but at step 2 it relies on k-means instead of core-sets, where the size of the candidate pool is configured relative to the batch size. Given batch size $b$ and a constant $\beta$ (between 10 and 50), it follows these steps:

- Train a classifier on the labeled data;

- Measure informativeness of every unlabeled example (e.g. using uncertainty metrics);

- Prefilter the top $\beta b \ge b$ most informative examples;

- Cluster the $\beta b$ examples into $b$ clusters;

- Select the $b$ different examples closest to the cluster centers for this round of active learning.
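A minimal sketch of these steps, assuming samples are feature vectors and informativeness scores are already computed (higher means more informative). It uses plain, unweighted Lloyd's k-means with random initialization; the data, scores, `beta`, and iteration count are illustrative only.

```python
import math
import random


def kmeans(X, k, iters=20, seed=0):
    """Plain Lloyd's k-means; returns k centers."""
    rng = random.Random(seed)
    centers = [list(c) for c in rng.sample(X, k)]
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for x in X:
            nearest = min(range(k), key=lambda c: math.dist(x, centers[c]))
            clusters[nearest].append(x)
        for c, members in enumerate(clusters):
            if members:  # keep the previous center if a cluster empties out
                centers[c] = [sum(col) / len(members) for col in zip(*members)]
    return centers


def diverse_minibatch(X, scores, b, beta=10, seed=0):
    """Prefilter the top beta*b by score, cluster into b groups,
    and take the example closest to each cluster center."""
    top = sorted(range(len(X)), key=lambda i: scores[i], reverse=True)[: beta * b]
    centers = kmeans([X[i] for i in top], b, seed=seed)
    chosen = []
    for center in centers:
        best = min((i for i in top if i not in chosen), key=lambda i: math.dist(X[i], center))
        chosen.append(best)
    return chosen


X = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (5.0, 5.0), (5.1, 5.0), (5.0, 5.1), (9.0, 9.0)]
scores = [0.9, 0.8, 0.7, 0.9, 0.8, 0.7, 0.0]
batch = diverse_minibatch(X, scores, b=2, beta=3)
```

The low-scoring outlier at (9, 9) is dropped by the prefilter, and clustering then forces one pick from each of the two groups instead of two near-duplicates.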

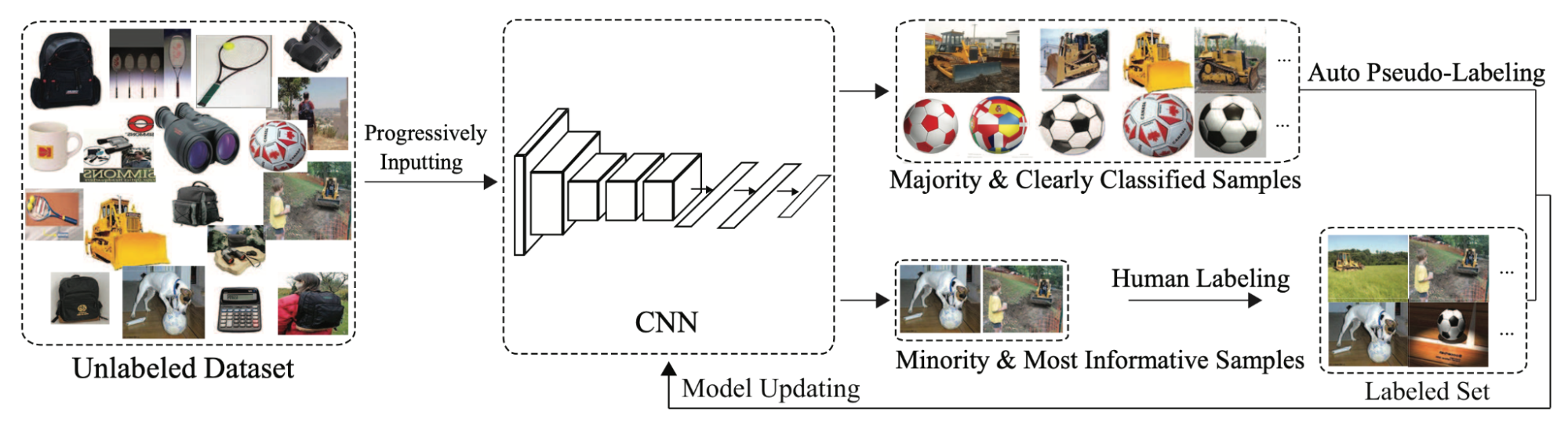

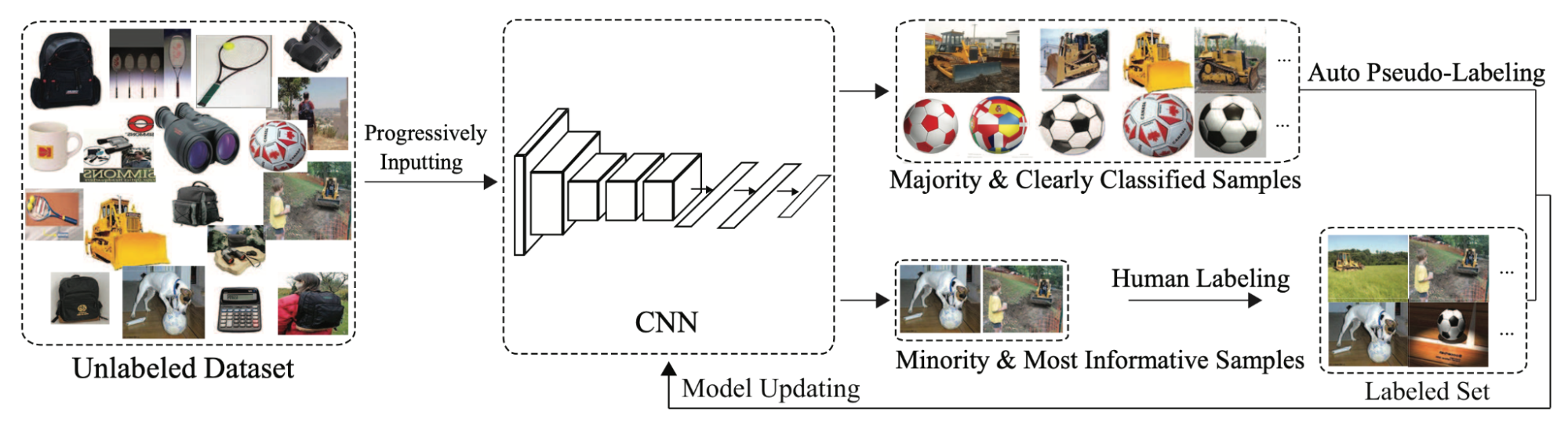

Active learning can be further combined with semi-supervised learning to save budget. CEAL (Cost-Effective Active Learning; Yang et al. 2017) runs two things in parallel:

- Select uncertain samples via active learning and get them labeled;

- Select samples with the most confident predictions and assign them pseudo labels. Prediction confidence is judged by whether the prediction entropy is below a threshold δ. As the model gets better over time, the threshold δ decays over time as well.

Fig. 12. Illustration of CEAL (cost-effective active learning). (Image source: Yang et al. 2017)
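A minimal sketch of one CEAL round, assuming the model's class probabilities for each unlabeled sample are given. The `k` most uncertain samples (highest entropy) go to annotators, and the remaining samples whose entropy falls below the threshold receive their predicted class as a pseudo label. The text only says that δ decays over time; the linear schedule `delta0 - decay_rate * t` is an assumption for illustration.

```python
import math


def entropy(p):
    return -sum(pk * math.log(pk) for pk in p if pk > 0)


def ceal_round(probs, k, delta0, decay_rate, t):
    """Split unlabeled samples into (ids to label, {id: pseudo label}) at round t."""
    delta = delta0 - decay_rate * t                          # assumed linear decay
    ent = {i: entropy(p) for i, p in probs.items()}
    to_label = sorted(ent, key=ent.get, reverse=True)[:k]    # most uncertain
    pseudo = {
        i: max(range(len(p)), key=p.__getitem__)             # predicted class
        for i, p in probs.items()
        if i not in to_label and ent[i] < delta               # confident enough
    }
    return to_label, pseudo


probs = {"a": [0.5, 0.5], "b": [0.98, 0.02], "c": [0.6, 0.4], "d": [0.01, 0.99]}
early = ceal_round(probs, k=1, delta0=0.1, decay_rate=0.01, t=0)
later = ceal_round(probs, k=1, delta0=0.1, decay_rate=0.01, t=2)
```

As δ shrinks, fewer samples qualify for pseudo labels, so the pseudo-labeled set becomes stricter over time.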
